Agent Skills as a Strategic Capability in Process-Driven Organizations

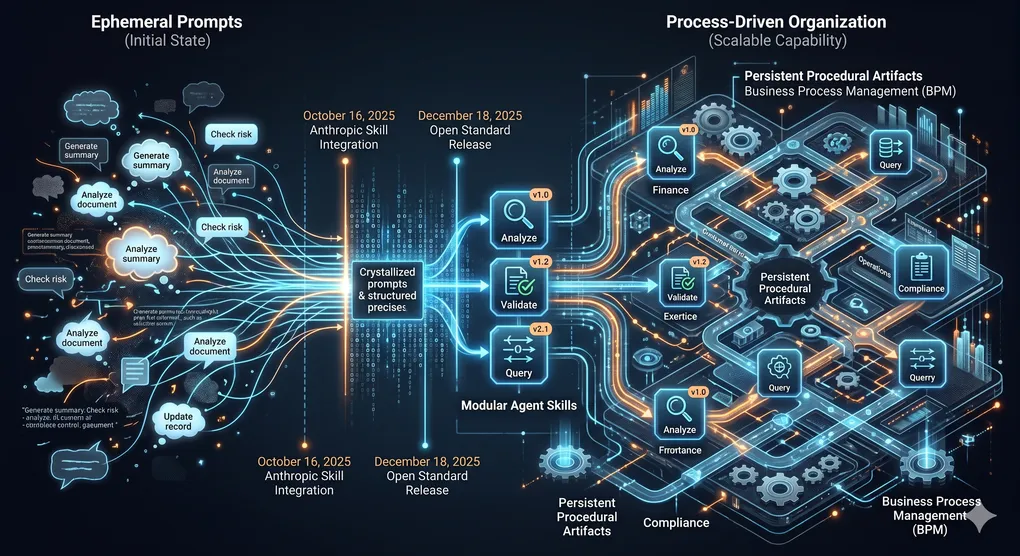

The integration of modular Agent Skills, introduced by Anthropic on October 16, 2025, and released as an open standard on December 18, 2025, represents a structural shift in how organizations operationalize generative artificial intelligence inside business processes. Skills convert ephemeral prompts into persistent, versioned, discoverable procedural artifacts, and in doing so, they reopen a set of long-standing questions in Business Process Management (BPM) and digital transformation research about what it takes for a technology to function as a scalable organizational capability rather than a set of isolated automations. The central argument of this synthesis is that Skills, taken as a sociotechnical artifact rather than a mere file format, can be understood as a twenty-first-century instantiation of modular design principles (Parnas, 1972; Baldwin & Clark, 2000) applied to the procedural knowledge layer of the firm, and that their strategic impact is determined less by the technology itself than by the managerial, governance, and process-design conditions under which they are authored, curated, and deployed. Skills, therefore, fall squarely within the research program of AI-augmented BPM systems (Dumas et al., 2023) and Agentic BPM (Vu et al., 2026), while extending earlier conversations on process standardization, robotic process automation (RPA), and digital innovation. The stakes are substantive: early security audits indicate that 36.8% of publicly distributed Skills already contain at least one security flaw (Snyk, 2026), and field evidence on generative AI shows both strong productivity gains (Brynjolfsson et al., 2025) and performance degradation on tasks outside the “jagged technological frontier” (Dell’Acqua et al., 2023). 
The remainder of this synthesis develops the theoretical foundations, situates Skills as a modular capability paradigm, articulates the conditions under which they scale, examines governance and performance implications, and proposes a conceptual framework that organizes these insights.

Theoretical foundations in BPM and agentic AI

Business Process Management, as codified by Dumas, La Rosa, Mendling, and Reijers (2018), conceives of organizational work as a lifecycle comprising process identification, discovery, analysis, redesign, implementation, and monitoring and controlling. This lifecycle is complemented by the six core elements articulated by Rosemann and Vom Brocke (2015): strategic alignment, governance, methods, information technology, people, and culture, which together define the capability areas that an organization must develop to deliver sustainable value through BPM. Van der Aalst (2016) extended the discipline’s empirical foundations through process mining, turning event logs into evidence for conformance checking, bottleneck analysis, and performance diagnosis, while later work on object-centric process mining enlarged the unit of analysis beyond the single case. These canonical works share an assumption that remains productive for reasoning about AI Skills: processes are composable artifacts whose performance depends on the alignment between their design and the organizational capabilities that support them.

The digital-transformation literature adds a complementary theoretical lens. Vial (2019), in a review of 282 works, defined digital transformation as a process in which digital technologies trigger disruptions that organizations respond to by altering their value-creation paths while managing structural changes and organizational barriers. Verhoef et al. (2021) and Plekhanov, Netland, and Henriette (2022) emphasized that such transformations succeed only when complemented by changes in organizational structures, roles, and culture, a claim consistent with Brynjolfsson, Rock, and Syverson’s (2021) productivity J-curve argument that intangible organizational investments are a precondition for visible gains from general-purpose technologies. Baiyere, Salmela, and Tapanainen (2020) specifically argued that digital transformation forces a rethinking of BPM, loosening the notion of stable, well-defined processes in favor of more emergent, data-driven assemblies. Beerepoot et al. (2023) synthesized the field’s outstanding problems, among them the question of how BPM integrates with non-symbolic, statistical technologies such as large language models (LLMs). Vom Brocke, Rosemann, Van Looy, and Santoro (2024) labeled the current moment as a period of “three essential drifts” in BPM, including the shift from deterministic to probabilistic process execution, from human-centric to agent-centric participation, and from closed processes to context-aware, adaptive ones.

Research on agentic AI has converged on the question of how to endow LLM-based agents with stable, specialized competence. Surveys by Wang et al. (2024) and Xi et al. (2025) synthesized architectures built on planning, memory, and tool use, while Yao et al. (2023) formalized reasoning-and-acting interleaving (ReAct) and Schick et al. (2023) introduced self-directed tool invocation via Toolformer. At the intersection with BPM, the research manifesto by Dumas et al. (2023) envisioned AI-augmented BPM systems (ABPMS) characterized by situation-aware explainability, adaptive execution, and bounded autonomy, and Kampik et al. (2024) proposed Large Process Models (LPMs) as a neuro-symbolic integration of LLMs with formalized process knowledge. Grohs, Abb, Elsayed, and Rehse (2024) demonstrated empirically that LLMs can perform lifecycle tasks such as extracting declarative and imperative process models from textual descriptions, and Beheshti et al. (2023) introduced ProcessGPT as a domain-specialized foundation for next-action recommendation. Vu et al. (2026), in an interview study of 22 BPM practitioners, proposed the concept of Agentic BPM and documented expectations for efficiency, data quality, compliance, and scalability gains, alongside fears of bias, over-reliance, cybersecurity threats, and ambiguous accountability. This body of work establishes that the integration of autonomous, generative agents into processes is no longer speculative, but remains under-institutionalized: it lacks shared artifacts by which process knowledge can be captured, versioned, and reused across agents and platforms. Agent Skills enter this theoretical space as exactly such an artifact.

Agent Skills as a modular capability paradigm

Technically, a Skill is a directory containing a SKILL.md file with YAML frontmatter (minimally, a name and a description) plus Markdown instructions and optional scripts, references, and assets (Anthropic, 2025). The architecture operationalizes progressive disclosure in three tiers: roughly fifty tokens of metadata are loaded into the system prompt at startup, approximately five hundred tokens of instructions are loaded when the agent judges the Skill relevant, and reference files and scripts are loaded only when specific subtasks demand them (Anthropic, 2025; Adwaitx, 2026). This design addresses a concrete cognitive-engineering problem, context-window saturation, while preserving the ability to encode large bodies of procedural knowledge. Released as an open standard on December 18, 2025, and donated alongside the Model Context Protocol (MCP) to the Agentic AI Foundation under the Linux Foundation, the specification was quickly mirrored by OpenAI in ChatGPT and Codex, Microsoft in VS Code and GitHub Copilot, and agent platforms such as Cursor, Goose, Amp, and OpenCode (Anthropic, 2025; The New Stack, 2025; VentureBeat, 2025).
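
The three-tier loading described above can be illustrated with a minimal Python sketch. It is not Anthropic's implementation: it assumes the frontmatter contains only flat `key: value` pairs (a real deployment would use a YAML parser), and the names `Skill`, `parse_skill_md`, `tier1_metadata`, `tier2_instructions`, and `tier3_reference`, as well as the example Skill itself, are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    name: str
    description: str
    body: str                                        # Markdown instructions (tier 2)
    references: dict = field(default_factory=dict)   # filename -> text (tier 3)


def parse_skill_md(text):
    """Split a SKILL.md string into frontmatter metadata and Markdown body.

    Assumption: the frontmatter holds only flat `key: value` pairs, so a
    hand-rolled parser is enough for this sketch.
    """
    lines = [line.rstrip() for line in text.strip().splitlines()]
    if not lines or lines[0] != "---":
        raise ValueError("SKILL.md must start with a '---' frontmatter fence")
    try:
        end = lines.index("---", 1)
    except ValueError:
        raise ValueError("frontmatter fence is never closed") from None
    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()
    for required in ("name", "description"):
        if required not in meta:
            raise ValueError(f"missing required field: {required}")
    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta["name"], meta["description"], body)


def tier1_metadata(skills):
    """Tier 1: only names and descriptions enter the system prompt."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def tier2_instructions(skill):
    """Tier 2: the instructions, loaded once the agent judges the Skill relevant."""
    return skill.body


def tier3_reference(skill, filename):
    """Tier 3: a reference file, loaded only when a subtask needs it."""
    return skill.references[filename]


EXAMPLE = """---
name: invoice-reconciliation
description: Reconcile supplier invoices against purchase orders.
---
# Steps
1. Match each invoice line to a purchase-order line.
"""

skill = parse_skill_md(EXAMPLE)
print(tier1_metadata([skill]))
```

The point of the separation is that only the output of `tier1_metadata` is paid for in every conversation; the other two tiers cost context only when they are actually used.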

Conceptually, Skills should be distinguished from adjacent primitives. Prompts supply a single-turn directive. Tools, exposed via function calls or MCP, enable agents to execute actions against external systems. Plugins and GPTs, in the OpenAI lineage, combine tools with a thin shell of instructions tied to a proprietary runtime. Skills differ by externalizing procedural knowledge as a first-class, portable, file-based artifact that complements MCP tool connectivity rather than replacing it (PulseMCP, 2025). Where MCP provides “permissioned access to enterprise systems,” Skills encode “repeatable operational procedures” (The AI Track, 2025). This decomposition aligns precisely with the distinction that Baldwin and Clark (2000) drew between visible design rules (stable interfaces that coordinate independent work) and hidden modules (internal mechanisms that can evolve independently). In a Skill, the YAML description serves as a visible design rule that the orchestrating agent uses to determine relevance; the body, assets, and scripts serve as hidden modules that can be rewritten without disturbing the rest of the agent system.

The intellectual lineage of this move is extensive. Parnas (1972) argued that modules should be defined by the decisions they hide; Baldwin and Clark (2000) showed that modular architectures expand the “option value” of design, allowing independent experimentation within stable interfaces; Brusoni et al. (2023) documented how that theory has been productively extended to organizational design, mirroring hypotheses, and platform ecosystems. Skills extend this logic to procedural knowledge: each Skill is an independently evolvable module with a machine-readable description that functions as a contract. A parallel can be drawn with microservices and internal developer platforms, where small, independently deployable components communicate through explicit interfaces, and where practitioner experience has shown that modularity without governance produces sprawl (Newman’s well-documented cautions on microservices decomposition). The same risk applies here: Anthropic researcher Barry Zhang’s observation that “the agent underneath is more universal than we expected” implies that enterprises may capture greater return on investment by curating shared Skills than by proliferating bespoke agents (The AI Track, 2025), but only if the curation discipline is in place.

Conditions for scalability

Whether a Skill functions as a scalable strategic capability or degenerates into a fragmented task automation depends on a confluence of theoretical, technical, and managerial conditions. Theoretically, a Skill scales to the extent that it satisfies what might be called the modularity preconditions: its scope must be well defined, its interface (name and description) must be semantically precise enough for an agent to trigger it reliably, and its hidden implementation must be decoupled from the specifics of the invoking agent. Estrada-Torres, del-Río-Ortega, and Resinas (2024), mapping the landscape of LLM applications in BPM, found that the most durable use cases are those in which the unit of automation corresponds to a recognizable process activity with clear inputs, outputs, and decision criteria. When Skills are authored below this level of granularity, as thin wrappers around a single prompt, they accumulate into what RPA researchers have described as “bot sprawl,” mirroring the failure modes Eulerich, Waddoups, Wagener, and Wood (2024) documented in the dark side of RPA, where 30 to 50 percent of initial projects failed at implementation.

Technically, scalability hinges on three capabilities. First, description quality determines activation fidelity: because the agent decides based on the name and description alone, poorly written descriptions yield either starvation (the Skill is never invoked when it should be) or interference (it is invoked when it should not be). This is consistent with Anthropic’s own guidance to “pay special attention to the name and description” (Anthropic, 2025). Second, composition requires careful attention to mutual exclusivity and reference file design, with Anthropic explicitly recommending that skill authors split context into separate files “if certain contexts are mutually exclusive or rarely used together” to reduce token usage. Third, the discovery mechanism must be robust across the ecosystem: Judin’s observation in early December 2025 that OpenAI had adopted structurally identical skill directories (The AI Track, 2025) confirms cross-platform portability at the specification level, but enterprise deployments still require a resolvable registry with semantic search. The 2026 Anthropic enterprise plug-ins for finance, legal, engineering, and HR (TechCrunch, 2026) represent early institutional answers to this requirement.
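
One way to make activation fidelity measurable is to replay labeled requests against an agent and count the two error types named above. The following sketch assumes such a labeled set exists; the function name `activation_fidelity` and the tuple layout are hypothetical, and how the labels are obtained is left open.

```python
def activation_fidelity(trials):
    """Compute starvation and interference rates for one Skill.

    trials: iterable of (should_trigger, did_trigger) boolean pairs, one per
    test request. Starvation is the share of relevant requests on which the
    Skill was not invoked; interference is the share of irrelevant requests
    on which it was invoked anyway.
    """
    trials = list(trials)
    relevant = [did for should, did in trials if should]
    irrelevant = [did for should, did in trials if not should]
    starvation = relevant.count(False) / len(relevant) if relevant else 0.0
    interference = irrelevant.count(True) / len(irrelevant) if irrelevant else 0.0
    return {"starvation": starvation, "interference": interference}


# Four relevant requests (one missed) and four irrelevant ones (two false triggers).
sample = [
    (True, True), (True, True), (True, False), (True, True),
    (False, False), (False, True), (False, True), (False, False),
]
print(activation_fidelity(sample))
```

Tracking both rates separately matters because a description can be tuned to reduce one at the expense of the other.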

Managerially, the conditions mirror those that Rosemann and vom Brocke (2015) identified for BPM capability more broadly. Strategic alignment requires that Skills be authored against a process architecture the organization actually uses, rather than against the incidental demands of individual teams. Governance, in the BPM sense, requires clearly assigned ownership for each Skill, a lifecycle for review and retirement, and alignment with the organization’s policy regime. Methods involve the consistent use of process analysis techniques, such as qualitative and quantitative flow analysis (Dumas et al., 2018), to identify which activities are good candidates for encapsulation. Information technology concerns the platform layer, including integration with MCP, identity systems, and observability stacks. The people element captures the question of Skill ownership: Sava and Militaru (2025) found that domain experts, not only engineers, can productively author meta-optimized process Skills when given the right tooling, but this requires training and recognition. Culture captures the willingness to treat AI-augmented procedures as codified organizational knowledge subject to the same review discipline as code. Absent any one of these elements, Skills tend to accumulate rather than compose, a phenomenon practitioners have already begun calling “skill sprawl” by analogy to bot sprawl (Articsledge, 2026).

Two further conditions deserve particular emphasis. The first is the frequency-by-criticality threshold that determines when encapsulation is warranted. Drawing on the classical “rule of three” in software reuse and on the reusability thresholds implicit in Goel, Bandara and Gable’s (2023) synthesis of process standardization, a process step merits encapsulation as a Skill when it is executed often enough that the amortized authoring cost is recovered, and when its quality requirements are strict enough that ad hoc prompting produces unacceptable variance. The second is absorptive capacity (Cohen & Levinthal, 1990; Zahra & George, 2002): an organization can exploit a library of Skills only to the extent that it can recognize the value of external Skills, assimilate them into its process architecture, transform them to fit its context, and apply them to commercial ends. This is the mechanism by which Anthropic’s partner-built Skill directory, launched with Atlassian, Figma, Canva, Stripe, Notion, and Zapier (VentureBeat, 2025), converts from a catalog into a capability.
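
The frequency-by-criticality threshold can be stated as a simple decision rule. The formalization below is an illustrative assumption rather than a calibrated model from the cited literature: it treats cost recovery as a comparison of minutes saved against authoring and maintenance minutes, treats unacceptable variance as a defect rate above a tolerance, and uses hypothetical parameter names throughout.

```python
def merits_encapsulation(
    runs_per_year,
    minutes_saved_per_run,
    authoring_minutes,
    yearly_maintenance_minutes,
    adhoc_defect_rate,
    max_defect_rate,
    horizon_years=1.0,
):
    """Return True when both conditions from the text hold.

    1. Frequency: savings over the horizon recover authoring plus maintenance cost.
    2. Criticality: ad hoc prompting fails more often than the process tolerates.
    """
    total_saving = runs_per_year * minutes_saved_per_run * horizon_years
    total_cost = authoring_minutes + yearly_maintenance_minutes * horizon_years
    cost_recovered = total_saving >= total_cost
    variance_unacceptable = adhoc_defect_rate > max_defect_rate
    return cost_recovered and variance_unacceptable


# Frequent and quality-critical step: 1500 minutes saved against 840 minutes of cost.
print(merits_encapsulation(500, 3, 600, 240, 0.08, 0.02))
# Rare step: 60 minutes saved does not recover the same cost.
print(merits_encapsulation(20, 3, 600, 240, 0.08, 0.02))
```

Because the rule is a conjunction, a frequently executed step whose ad hoc results are already within tolerance does not qualify, and neither does a critical step that runs too rarely to repay its authoring cost.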

Governance and accountability in Skill-based automation

The governance of Skill-based agents must be technology-agnostic in its principles while being concrete in its mechanisms. Four principles travel well from traditional AI governance literature (Mittelstadt, 2019; NIST, 2023; ISO/IEC, 2023) into the Skill context: accountability requires that each production Skill has a named owner responsible for its behavior; auditability requires that the agent’s invocation of a Skill, the Skill content at the time of invocation, and the actions executed are logged in a traceable manner; version control requires that Skills be treated as artifacts under configuration management rather than as ambient text; and role-based access control requires that the availability of a Skill to a given agent or user be conditioned on authorization. Anthropic’s December 2025 enterprise features, which allow administrators to provision Skills centrally and to enforce which Skills are available to which employees (VentureBeat, 2025), operationalize the third and fourth of these principles at the platform level, but the first two must be supplied by organizational practice.
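
A minimal sketch of how the four principles might meet in a single invocation path follows. The registry layout, the field names, and the `invoke_skill` function are hypothetical and do not describe any vendor's platform; the content hash is one possible way to record the Skill content at the time of invocation.

```python
import hashlib
import json
from datetime import datetime, timezone

# Hypothetical in-memory registry; a real one would live in a managed store.
SKILL_REGISTRY = {
    "refund-approval": {
        "owner": "finance-ops@example.com",     # accountability: named owner
        "version": "1.2.0",                     # version control
        "allowed_roles": {"finance-analyst"},   # role-based access control
        "content": "---\nname: refund-approval\ndescription: Approve refunds.\n---\n",
    }
}


def invoke_skill(skill_name, user, role, actions, registry, audit_log):
    """Check authorization, then append a traceable record of the invocation."""
    entry = registry[skill_name]
    if role not in entry["allowed_roles"]:
        raise PermissionError(f"role {role!r} may not invoke {skill_name!r}")
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "skill": skill_name,
        "version": entry["version"],
        "owner": entry["owner"],
        # Auditability: the hash pins the exact Skill text that was in force.
        "content_sha256": hashlib.sha256(entry["content"].encode("utf-8")).hexdigest(),
        "user": user,
        "role": role,
        "actions": list(actions),
    }
    audit_log.append(json.dumps(record, sort_keys=True))
    return record


log = []
record = invoke_skill(
    "refund-approval", "a.lee", "finance-analyst", ["issue_refund"], SKILL_REGISTRY, log
)
print(record["skill"], record["version"], record["content_sha256"][:12])
```

Storing a hash rather than the full text keeps log entries small while still allowing an auditor to match an invocation to a specific committed version of the Skill.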

The empirical evidence for the necessity of such governance is now substantial. Snyk’s February 2026 ToxicSkills audit of 3,984 public Skills found that 36.82% contained at least one security flaw, and 13.4% contained critical issues, such as malware distribution, prompt-injection payloads, and exposed secrets (Snyk, 2026). Snyk’s threat modeling showed that “three lines of markdown in a SKILL.md file” could instruct an agent to exfiltrate SSH keys, and that 100% of confirmed malicious Skills combined malicious code patterns with prompt-injection techniques, resulting in a compounded attack surface (Snyk, 2026). The OWASP Agentic Skills Top 10 project, launched in early 2026, catalogs risks ranging from indirect prompt injection via untrusted third-party content fetched by Skills, to over-permissioned Skill agency (for example, a Skill named manage_database whose credentials carry DROP TABLE privileges), to log poisoning attacks that modify agent memory persistently (OWASP, 2026). These findings validate the architectural diagnosis that Skills are “hybrid artifacts” requiring both traditional static analysis and natural-language vulnerability analysis (Snyk, 2026), and they justify treating Skill distribution as a software supply-chain security problem analogous to the early days of npm and PyPI.

From this evidence, a practical governance pattern has begun to emerge. Organizations authoring Skills internally tend to adopt Git-based workflows in which SKILL.md files live in a repository, undergo code review, are tagged with semantic versions, and are promoted from development to staging to production through continuous integration pipelines. High-risk Skills, defined as those invoking actions with material financial, legal, or safety consequences, are attached to human-in-the-loop gates through which the agent must request explicit approval before execution (Snyk, 2026). Red-team evaluations, analogous to those proposed by Dell’Acqua et al. (2023) for AI-augmented knowledge work, probe for jailbreaks, prompt injections, and data exfiltration paths before Skills are promoted. Observability infrastructures log every Skill invocation with user identity, input context, and downstream actions, producing audit trails suitable for both internal review and regulatory inspection under the EU AI Act (in force August 2024, with phased obligations through 2026), the NIST AI Risk Management Framework (2023), and ISO/IEC 42001:2023. The Skill paradigm, precisely because it reifies procedural knowledge as a file, is unusually amenable to this governance discipline, far more so than free-form prompts embedded in application code. The same property that makes Skills auditable, however, also makes them distributable, and thus supply-chain risk becomes a first-order governance concern.
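
The promotion path and the human-in-the-loop gate described above can be sketched as two small functions. This is an illustration under stated assumptions, not a reference pipeline: the stage names follow the text, but the risk tags, the function names, and the approval callback are hypothetical, and the version parser handles only plain `MAJOR.MINOR.PATCH` strings without pre-release suffixes.

```python
STAGES = ["development", "staging", "production"]
HIGH_RISK_TAGS = {"financial", "legal", "safety"}


def parse_semver(version):
    """Turn 'MAJOR.MINOR.PATCH' into a tuple of ints so versions compare numerically."""
    major, minor, patch = (int(part) for part in version.split("."))
    return (major, minor, patch)


def next_stage(stage, version, deployed_version, code_reviewed, red_team_passed):
    """Return the stage a Skill may be promoted to, or raise ValueError."""
    if stage not in STAGES[:-1]:
        raise ValueError(f"cannot promote from {stage!r}")
    if not code_reviewed:
        raise ValueError("code review is required before promotion")
    if stage == "staging" and not red_team_passed:
        raise ValueError("red-team evaluation must pass before production")
    if parse_semver(version) <= parse_semver(deployed_version):
        raise ValueError("version must be higher than the deployed one")
    return STAGES[STAGES.index(stage) + 1]


def execute_action(action, risk_tags, approve=None):
    """Run an action, routing high-risk Skills through a human approval callback."""
    if HIGH_RISK_TAGS & set(risk_tags):
        if approve is None or not approve(action):
            return f"blocked: {action}"
    return f"executed: {action}"


print(next_stage("development", "1.10.0", "1.9.3", True, False))
print(execute_action("issue refund", ["financial"]))
print(execute_action("issue refund", ["financial"], approve=lambda action: True))
```

Comparing versions as integer tuples rather than strings avoids ranking 1.9.3 above 1.10.0, a common pitfall when tags are sorted lexically.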

Beyond security, governance must also attend to the managerial dimensions of accountability. Schneider, Abraham, Meske, and Vom Brocke (2023) argued that AI governance in organizations requires role definitions that distribute decision rights across business, technical, legal, and compliance functions. In the Skill context, this translates into a product-management discipline: each production Skill needs a named owner who is accountable for its fitness for purpose, a review schedule commensurate with its risk class, and a retirement policy to prevent obsolete Skills from silently continuing to be invoked. Team Topologies concepts (Skelton & Pais, 2019) suggest organizing such ownership around stream-aligned product teams for domain Skills and around a platform team for shared infrastructure Skills, with an enabling team acting as a center of excellence during the maturation phase.

Organizational performance implications

Evidence on the performance effects of generative AI in work settings, while not yet specific to Skills, provides the best available baseline. Brynjolfsson, Li, and Raymond (2025) documented a 14% increase in the average number of customer-support issues resolved per hour, rising to 34% for novice and low-skilled workers, when an AI-based conversational assistant was deployed. Dell’Acqua et al. (2023), in a field experiment with 758 Boston Consulting Group consultants, found that access to GPT-4 improved performance by approximately 40% on tasks inside the technological frontier while degrading performance on tasks outside it, and introduced the concepts of “centaurs” (who divide and delegate) and “cyborgs” (who continuously integrate AI into their workflow) as distinct adaptation patterns. Noy and Zhang (2023) reported writing-task completion times 40% faster with ChatGPT support. These studies converge on a conclusion directly relevant to Skills: productivity gains are conditional on task selection, verification, and complementary investment, not on model capability alone (Fruits, Sperry & Stout, 2025).

Skills plausibly amplify and redistribute these effects through three mechanisms. First, by codifying the procedural best practice associated with a task, a Skill reduces variance across executions in a manner consistent with the quality-compression effect observed by Brynjolfsson, Li, and Raymond (2025), in which AI assistance disproportionately raises the performance of lower-skilled workers by disseminating the practices of more able ones. Second, by rendering the procedure reusable across agents and platforms, Skills reduce the marginal cost of applying an improvement, turning single-team innovations into firm-wide capability upgrades. Third, by specifying inputs and outputs explicitly, Skills enable systematic evaluation: the Brynjolfsson-style measurement of issues resolved per hour becomes tractable at the Skill level, which in turn enables continuous improvement. Anthropic’s December 2025 internal study reported that employees used Claude in 60% of their work with a self-reported 50% productivity increase, and that 27% of Claude-assisted work involved tasks that would not have been undertaken otherwise, including the creation of internal tools, documentation, and quality-of-life fixes (The AI Track, 2025). This last finding is particularly significant: Skills may expand the feasible set of automated tasks, not only accelerate existing ones, a pattern consistent with dynamic-capabilities theory (Teece, 2007) in which new resources reconfigure what an organization can do.

Adaptability outcomes, however, are less clear-cut. Doshi and Hauser (2024) found that generative AI boosts individual creativity but reduces the collective diversity of outputs by 10.7%, and Dell’Acqua et al. (2023) observed markedly less variability among elite consultants using GPT-4. If Skills become the primary repository of procedural knowledge, they may amplify this homogenization: the same Skill invoked across thousands of interactions will produce statistically similar outputs, which is desirable for compliance-sensitive activities but problematic for innovation-sensitive ones. A plausible conjecture, not yet empirically established, is that organizations will need to differentiate between exploitation Skills (optimized for consistency) and exploration Skills (optimized for creativity), echoing March’s (1991) classical tension. The BPM-in-the-age-of-AI drift, articulated by vom Brocke, Rosemann, Van Looy, and Santoro (2024), points in a similar direction.

Failure modes are already documented. Beyond the security issues surveyed above, practitioners report three recurrent problems. Skill proliferation without curation leads to discoverability failures and to context bloat when many Skills match a description (Articsledge, 2026). Brittle automation results when Skills encode process steps that are not sufficiently stable, leading to frequent maintenance burdens as upstream systems change. Over-reliance by users who “switch off their brains and follow what AI recommends” (Dell’Acqua et al., 2023) is a well-documented risk that Skills, by virtue of their appearance as authoritative, may exacerbate. The February 2026 Anthropic acknowledgment that “2025 was meant to be the year agents transformed the enterprise, but the hype turned out to be mostly premature” (TechCrunch, 2026) frames these failure modes as diagnostic rather than fatal. However, they define the boundary conditions of any realistic performance claim.

A proposed conceptual framework

Synthesizing the preceding analysis, this section proposes a conceptual framework, which may be termed the Skill-Enabled Process Capability (SEPC) framework, to map the conditions under which Agent Skills function as scalable strategic capabilities rather than fragmented task automations. The framework consists of five interrelated elements arranged around the central construct of the Skill as a sociotechnical artifact.

The first element is the process-analytic foundation. A Skill becomes strategically meaningful only when it is authored against a process whose scope, inputs, outputs, performance measures, and stakeholders have been identified through the BPM lifecycle (Dumas et al., 2018). The act of identifying Skill candidates is therefore continuous with the identification and discovery phases of that lifecycle, and the act of evaluating Skill performance is continuous with the monitoring phase. This grounds Skills in the same analytical tradition that supports process mining (van der Aalst, 2016) and ensures that the unit of encapsulation is a genuine process activity rather than an incidental prompt.

The second element is the modularity design layer. Drawing on Baldwin and Clark (2000) and Parnas (1972), the framework posits that each Skill must satisfy three design criteria: a semantically precise description that functions as a visible design rule; a hidden implementation that can evolve without disturbing invoking agents; and explicit dependencies, whether on MCP tools, other Skills, or assets, that are declared rather than implicit. Skills that violate any of these criteria collapse back into fragmented automations because their interfaces cannot support reliable composition.
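
The three design criteria lend themselves to automated checks at review time. The sketch below uses deliberately crude proxies, all of which are assumptions: a minimum word count stands in for semantic precision, a short list of runtime names stands in for coupling to a specific agent, and a hypothetical `mcp:name` / `skill:name` reference convention stands in for dependency declarations.

```python
import re

# Illustrative only: product names whose mention suggests coupling to one runtime.
AGENT_SPECIFIC_TERMS = ("claude code", "codex", "cursor")


def lint_skill(description, body, declared_dependencies, min_words=8):
    """Return a list of design-criteria violations for one Skill."""
    issues = []
    # Criterion 1: the description must be precise enough to act as an interface.
    if len(description.split()) < min_words:
        issues.append("description too short to act as a reliable interface")
    # Criterion 2: the hidden implementation should not depend on the invoking agent.
    lowered = body.lower()
    for term in AGENT_SPECIFIC_TERMS:
        if term in lowered:
            issues.append(f"body is coupled to a specific agent: {term}")
    # Criterion 3: every referenced tool or Skill must be declared explicitly.
    referenced = set(re.findall(r"\b(?:mcp|skill):[\w-]+", body))
    for dep in sorted(referenced - set(declared_dependencies)):
        issues.append(f"undeclared dependency: {dep}")
    return issues


problems = lint_skill(
    "Reconcile invoices.",
    "Use mcp:erp-read, then call skill:fx-rates. Works in Cursor.",
    ["mcp:erp-read"],
)
for problem in problems:
    print(problem)
```

Checks of this kind cannot establish that a description is semantically precise; they only catch the cheapest violations before a human reviewer looks at the Skill.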

The third element is the governance spine. Comprising accountability (named ownership), auditability (invocation logging), version control (configuration management), role-based access control (authorization), and risk classification (criticality-adjusted review gates), the governance spine converts Skills from text into controlled organizational artifacts. This spine is technology-agnostic: it relies on general principles of responsible technology management rather than on any particular formalism, and it inherits from established practices in software engineering, IT governance, and emergent AI risk management (NIST, 2023; ISO/IEC, 2023).

The fourth element is the managerial enablement layer, encompassing organizational readiness, skill ownership, knowledge management, and change management. Here, the framework draws on Rosemann and vom Brocke (2015), the codification-versus-personalization distinction of Hansen, Nohria, and Tierney (1999), and the absorptive-capacity construct of Cohen and Levinthal (1990). The key managerial claim is that Skills embody a codification strategy for procedural knowledge, and that realizing their value requires complementary investments in the roles, training, incentives, and cultural norms that sustain codification. Fountaine, McCarthy, and Saleh’s (2019) prescription for building an AI-powered organization and Davenport and Ronanki’s (2018) emphasis on orchestration over automation both apply.

The fifth element is the performance-and-learning loop. Skills enable, but do not guarantee, the observation of outcomes at a granular level. The loop requires evaluation harnesses that measure quality, efficiency, and adaptability at the Skill level; feedback channels that route incidents and near-misses back to Skill owners; and retirement mechanisms that remove Skills whose costs exceed their benefits. Without this loop, Skills become static artifacts that drift from the processes they ostensibly encode.

The causal logic of the framework is that Skills scale into strategic capabilities when and only when the process-analytic foundation, modularity design, governance spine, managerial enablement, and performance-learning loop are jointly present. Missing any one element produces a characteristic pathology: without the process-analytic foundation, Skills encode incidental rather than essential work; without modularity design, they cannot compose; without governance, they invite security and compliance failure; without managerial enablement, they remain technical artifacts with no organizational traction; and without the performance-learning loop, they ossify. The framework thus reframes the guiding question of this synthesis not as a binary between strategic capability and fragmented automation, but as a gradient along which organizations move as they accumulate the enabling conditions.

Discussion and open research questions

Several implications follow from the framework. For theory, the SEPC framework suggests that the long-standing BPM insight, namely that technology is necessary but insufficient for process capability, transfers directly to the Skill paradigm, but with a new emphasis on the artifact layer. Where earlier generations of process technology (workflow engines, enterprise service buses, RPA bots) encoded logic in platform-specific formats, Skills encode it in platform-agnostic text. This narrows the distance between process analysis and process implementation in a way that warrants theoretical attention: a Skill is, in effect, a process model and an executable artifact simultaneously, a convergence that earlier work on process-aware information systems (Dumas, van der Aalst & ter Hofstede, 2005) anticipated but did not realize at this level of practical generality. For managerial practice, the framework implies that organizations should resist the temptation to treat Skill adoption as a productivity program and instead treat it as a capability-building program, with all the implications for budgeting, staffing, and patience that Brynjolfsson, Rock, and Syverson’s (2021) J-curve analysis would predict.

Practitioner evidence also suggests that organizational archetypes differ in the conditions they satisfy. Digital-native firms, with strong engineering cultures and mature continuous-deployment practices, tend to quickly satisfy the modularity design and governance spine conditions. However, their challenge lies in the process-analytic foundation: they must avoid encoding engineering conveniences as enterprise procedures. Traditional incumbents in regulated sectors, by contrast, often have the process-analytic foundation (inherited from quality management regimes) and a strong governance culture, but lack the managerial enablement to have domain experts author Skills directly. Small and medium-sized enterprises face yet another pattern: lightweight governance, strong tacit knowledge, and limited capacity to maintain a Skills library, suggesting that, for them, partner-built Skill directories may be more consequential than internal authoring. These archetypes are hypotheses for empirical investigation rather than established findings.

Open research questions are numerous. First, how should Skill granularity be theorized? Existing modularity theory (Baldwin & Clark, 2000) offers principles, but not calibrated guidance for the LLM era, and the field lacks empirical studies linking Skill granularity to outcomes. Second, how do Skills interact with process mining? If event logs can reveal the Skills an agent invokes, process mining techniques could evaluate whether Skill-enabled processes conform to their intended designs, extending conformance checking into the agent era (Berti et al., 2024). Third, what is the right unit of accountability when a Skill authored by one organization is invoked by an agent operated by another on data governed by a third? The cross-jurisdictional ambiguity posed by the Agentic AI Foundation’s donation model remains unresolved. Fourth, how do Skills affect learning and tacit-knowledge transmission within organizations? Brynjolfsson, Li, and Raymond’s (2025) finding that AI disseminates best practices is suggestive. However, Skills-as-codification may paradoxically reduce the transmission of tacit knowledge that Nonaka and Takeuchi (1995) identified as essential to organizational innovation. Fifth, what emergent effects follow from cross-organizational Skill marketplaces? The convergence of industry standards around agentskills.io and the Agentic AI Foundation signals the beginning of a marketplace for procedural expertise whose implications for competitive strategy remain speculative. Sixth, how should the security findings be generalized? Snyk’s (2026) 36.82% flaw rate may reflect an early-ecosystem state that will decline as tooling matures, or it may indicate a structural vulnerability in natural-language executable artifacts; longitudinal research is needed.

Limitations of this synthesis should be acknowledged. The post-October 2025 empirical base on Skills specifically is thin and dominated by industry publications rather than peer-reviewed research, which warrants caution about any strong causal claim regarding Skill-induced performance. The mapping between Skills and BPM is proposed at the conceptual level and has not yet been subjected to field research. Moreover, while the governance analysis has been kept deliberately technology-agnostic, real implementations involve trade-offs between formal and informal controls that this synthesis has not resolved.

Conclusion

Agent Skills, introduced by Anthropic in October 2025 and opened as a cross-platform standard two months later, operationalize a proposition that the BPM and digital-transformation literatures have long anticipated: procedural knowledge, when modularized and made portable, can function as a reusable organizational asset rather than a sunk cost of each project. The significance of the paradigm, however, lies less in the technical elegance of progressive disclosure or SKILL.md than in the convergence it forces between two bodies of practice that had evolved in parallel. The BPM tradition, with its lifecycle, its six core elements, and its process-mining empiricism, supplies the analytical discipline for deciding what should become a Skill and for evaluating whether Skills are achieving the outcomes their authors intended. The agentic-AI tradition, with its architectures for planning, tool use, and memory, supplies the runtime in which Skills become effective.

The central conclusion of this synthesis is that Skills do not by themselves transform organizational performance, governance, or automation outcomes. They shift the locus of transformation to the joint satisfaction of five enabling conditions, namely process-analytic foundation, modular design, governance, managerial enablement, and a performance-learning loop. Organizations that satisfy these conditions stand to capture disproportionate gains, while those that do not are exposed to new supply-chain and security risks. The security findings of early 2026 and the productivity findings of 2023 through 2025 can be read together as a single message: the technology is sufficient to produce effects; the organization determines which effects. This reframes the debate over agentic AI away from capability benchmarks and toward the much older, and more difficult, question of how firms build capabilities. In that sense, Skills are less a novel phenomenon than the latest instance of a very familiar one, and the discipline they demand is the discipline BPM has been advocating for four decades. What is new is that the artifacts of that discipline are now, for the first time, directly executable by the systems doing the work.

References

Adwaitx. (2026, January). Anthropic unveils Agent Skills standard: $52B market shift. https://www.adwaitx.com/anthropic-agent-skills-open-standard-ai-market/

Anthropic. (2025, October 16). Equipping agents for the real world with Agent Skills. Anthropic Engineering Blog. https://www.anthropic.com/engineering/equipping-agents-for-the-real-world-with-agent-skills

Anthropic. (2025, December 18). Agent Skills as an open standard. Anthropic.

Articsledge. (2026, February). What are agent skills? The complete 2026 guide. https://www.articsledge.com/post/agent-skills

Baiyere, A., Salmela, H., & Tapanainen, T. (2020). Digital transformation and the new logics of business process management. European Journal of Information Systems, 29(3), 238–259. https://doi.org/10.1080/0960085X.2020.1718007

Baldwin, C. Y., & Clark, K. B. (2000). Design rules, Volume 1: The power of modularity. MIT Press.

Beerepoot, I., Di Ciccio, C., Reijers, H. A., Rinderle-Ma, S., Bandara, W., Burattin, A., Calvanese, D., Chen, T., Cohen, I., Depaire, B., Di Federico, G., Dumas, M., van Dun, C., Fehrer, T., Fischer, D. A., Gal, A., Indulska, M., Isahagian, V., Klinkmüller, C., … Zerbato, F. (2023). The biggest business process management problems to solve before we die. Computers in Industry, 146, 103837. https://doi.org/10.1016/j.compind.2023.103837

Beheshti, A., Yang, J., Sheng, Q. Z., Benatallah, B., Casati, F., Dustdar, S., Motahari Nezhad, H. R., Zhang, X., & Xue, S. (2023). ProcessGPT: Transforming business process management with generative artificial intelligence. In Proceedings of the 2023 IEEE International Conference on Web Services (pp. 731–739). IEEE.

Berti, A., Maatallah, M., Jessen, U., Sroka, M., & Ghannouchi, S. A. (2024). Re-thinking process mining in the AI-based agents era. arXiv. https://arxiv.org/abs/2408.07720

Brusoni, S., Henkel, J., Jacobides, M. G., Karim, S., MacCormack, A., Puranam, P., & Schilling, M. (2023). The power of modularity today: 20 years of “Design Rules.” Industrial and Corporate Change, 32(1), 1–10. https://doi.org/10.1093/icc/dtac054

Brynjolfsson, E., Li, D., & Raymond, L. R. (2025). Generative AI at work. Quarterly Journal of Economics. Advance online publication. (Earlier version: NBER Working Paper No. 31161, 2023.) https://www.nber.org/papers/w31161

Brynjolfsson, E., Rock, D., & Syverson, C. (2021). The productivity J-curve: How intangibles complement general purpose technologies. American Economic Journal: Macroeconomics, 13(1), 333–372. https://doi.org/10.1257/mac.20180386

Cohen, W. M., & Levinthal, D. A. (1990). Absorptive capacity: A new perspective on learning and innovation. Administrative Science Quarterly, 35(1), 128–152. https://doi.org/10.2307/2393553

Davenport, T. H., & Ronanki, R. (2018). Artificial intelligence for the real world. Harvard Business Review, 96(1), 108–116.

Dell’Acqua, F., McFowland, E., III, Mollick, E. R., Lifshitz-Assaf, H., Kellogg, K. C., Rajendran, S., Krayer, L., Candelon, F., & Lakhani, K. R. (2023). Navigating the jagged technological frontier: Field experimental evidence of the effects of AI on knowledge worker productivity and quality (Working Paper No. 24-013). Harvard Business School. https://ssrn.com/abstract=4573321

Doshi, A. R., & Hauser, O. P. (2024). Generative AI enhances individual creativity but reduces the collective diversity of novel content. Science Advances, 10(28). https://doi.org/10.1126/sciadv.adn5290

Dumas, M., Fournier, F., Limonad, L., Marrella, A., Montali, M., Rehse, J.-R., Accorsi, R., Calvanese, D., De Giacomo, G., Fahland, D., Gal, A., La Rosa, M., Völzer, H., & Weber, I. (2023). AI-augmented business process management systems: A research manifesto. ACM Transactions on Management Information Systems, 14(1), Article 11. https://doi.org/10.1145/3576047

Dumas, M., La Rosa, M., Mendling, J., & Reijers, H. A. (2018). Fundamentals of business process management (2nd ed.). Springer. https://doi.org/10.1007/978-3-662-56509-4

Dumas, M., van der Aalst, W. M. P., & ter Hofstede, A. H. M. (2005). Process-aware information systems: Bridging people and software through process technology. Wiley.

Estrada-Torres, B., del-Río-Ortega, A., & Resinas, M. (2024). Mapping the landscape: Exploring LLM applications in business process management. In Enterprise, business-process and information systems modeling (pp. 22–31). Springer.

Eulerich, M., Waddoups, N., Wagener, M., & Wood, D. A. (2024). The dark side of robotic process automation (RPA): Understanding risks and challenges with RPA. Accounting Horizons, 38(2), 143–152.

Fountaine, T., McCarthy, B., & Saleh, T. (2019). Building the AI-powered organization. Harvard Business Review, 97(4), 62–73.

Fruits, E., Sperry, G., & Stout, K. (2025). AI, productivity, and labor markets: A review of the empirical evidence. International Center for Law & Economics. https://laweconcenter.org/resources/ai-productivity-and-labor-markets-a-review-of-the-empirical-evidence/

Goel, K., Bandara, W., & Gable, G. (2023). Conceptualizing business process standardization: A review and synthesis. Schmalenbach Journal of Business Research, 75, 195–237.

Grohs, M., Abb, L., Elsayed, N., & Rehse, J.-R. (2024). Large language models can accomplish business process management tasks. In Business process management workshops (BPM 2023) (Lecture Notes in Business Information Processing, Vol. 492, pp. 453–465). Springer. https://doi.org/10.1007/978-3-031-50974-2_34

Hansen, M. T., Nohria, N., & Tierney, T. (1999). What’s your strategy for managing knowledge? Harvard Business Review, 77(2), 106–116.

International Organization for Standardization & International Electrotechnical Commission. (2023). ISO/IEC 42001:2023 — Information technology — Artificial intelligence — Management system. ISO. https://www.iso.org/standard/42001

Kampik, T., Warmuth, C., Rebmann, A., Agam, R., Egger, L. N. P., Gerber, A., Hoffart, J., Kolk, J., Herzig, P., Decker, G., van der Aa, H., Polyvyanyy, A., Rinderle-Ma, S., Weber, I., & Weidlich, M. (2024). Large process models: A vision for business process management in the age of generative AI. KI - Künstliche Intelligenz. https://doi.org/10.1007/s13218-024-00863-8

March, J. G. (1991). Exploration and exploitation in organizational learning. Organization Science, 2(1), 71–87. https://doi.org/10.1287/orsc.2.1.71

Mittelstadt, B. (2019). Principles alone cannot guarantee ethical AI. Nature Machine Intelligence, 1(11), 501–507. https://doi.org/10.1038/s42256-019-0114-4

National Institute of Standards and Technology. (2023). Artificial intelligence risk management framework (AI RMF 1.0). U.S. Department of Commerce. https://doi.org/10.6028/NIST.AI.100-1

Nonaka, I., & Takeuchi, H. (1995). The knowledge-creating company: How Japanese companies create the dynamics of innovation. Oxford University Press.

Noy, S., & Zhang, W. (2023). Experimental evidence on the productivity effects of generative artificial intelligence. Science, 381(6654), 187–192. https://doi.org/10.1126/science.adh2586

OWASP. (2026). OWASP Agentic Skills Top 10. OWASP Foundation. https://owasp.org/www-project-agentic-skills-top-10/

Parnas, D. L. (1972). On the criteria to be used in decomposing systems into modules. Communications of the ACM, 15(12), 1053–1058. https://doi.org/10.1145/361598.361623

Plekhanov, D., Netland, T., & Henriette, E. (2022). Digital transformation: A review and research agenda. Journal of Strategic Information Systems. https://doi.org/10.1016/j.jsis.2022.101720

PulseMCP. (2025, December). OpenAI adopts Agent Skills, Anthropic donates MCP. https://www.pulsemcp.com/posts/openai-agent-skills-anthropic-donates-mcp-gpt-5-2-image-1-5

Rosemann, M., & vom Brocke, J. (2015). The six core elements of business process management. In J. vom Brocke & M. Rosemann (Eds.), Handbook on business process management 1: Introduction, methods, and information systems (2nd ed., pp. 105–122). Springer. https://doi.org/10.1007/978-3-642-45100-3_5

Sava, M., & Militaru, G. (2025). Dynamically meta-optimized business processes using generative artificial intelligence. In M. Busu (Ed.), Smart solutions for a sustainable future (ICBE 2024) (pp. …). Springer. https://doi.org/10.1007/978-3-031-78179-7_19

Schick, T., Dwivedi-Yu, J., Dessì, R., Raileanu, R., Lomeli, M., Zettlemoyer, L., Cancedda, N., & Scialom, T. (2023). Toolformer: Language models can teach themselves to use tools. In Advances in neural information processing systems (Vol. 36).

Schneider, J., Abraham, R., Meske, C., & vom Brocke, J. (2023). Artificial intelligence governance for businesses. Information Systems Management, 40(3), 229–249. https://doi.org/10.1080/10580530.2022.2085825

Skelton, M., & Pais, M. (2019). Team topologies: Organizing business and technology teams for fast flow. IT Revolution.

Snyk. (2026, February). ToxicSkills: Prompt injection in 36%, 1,467 malicious payloads in the agent skills supply chain. Snyk Labs. https://snyk.io/blog/toxicskills-malicious-ai-agent-skills-clawhub/

Teece, D. J. (2007). Explicating dynamic capabilities: The nature and microfoundations of (sustainable) enterprise performance. Strategic Management Journal, 28(13), 1319–1350. https://doi.org/10.1002/smj.640

TechCrunch. (2026, February 24). Anthropic launches new push for enterprise agents with plug-ins for finance, engineering, and design. https://techcrunch.com/2026/02/24/anthropic-launches-new-push-for-enterprise-agents-with-plugins-for-finance-engineering-and-design/

The AI Track. (2025, December). Anthropic opens Agent Skills as an enterprise AI standard. https://theaitrack.com/anthropic-open-agent-skills-standard/

The New Stack. (2025, December 18). Agent Skills: Anthropic’s next bid to define AI standards. https://thenewstack.io/agent-skills-anthropics-next-bid-to-define-ai-standards/

van der Aalst, W. M. P. (2016). Process mining: Data science in action (2nd ed.). Springer. https://doi.org/10.1007/978-3-662-49851-4

VentureBeat. (2025, December 18). Anthropic launches enterprise Agent Skills and opens the standard. https://venturebeat.com/ai/anthropic-launches-enterprise-agent-skills-and-opens-the-standard

Verhoef, P. C., Broekhuizen, T., Bart, Y., Bhattacharya, A., Dong, J. Q., Fabian, N., & Haenlein, M. (2021). Digital transformation: A multidisciplinary reflection and research agenda. Journal of Business Research, 122, 889–901. https://doi.org/10.1016/j.jbusres.2019.09.022

Vial, G. (2019). Understanding digital transformation: A review and a research agenda. Journal of Strategic Information Systems, 28(2), 118–144. https://doi.org/10.1016/j.jsis.2019.01.003

vom Brocke, J., Rosemann, M., Van Looy, A., & Santoro, F. M. (2024). Business process management in the age of AI: Three essential drifts. Information Systems and e-Business Management. https://doi.org/10.1007/s10257-024-00689-9

vom Brocke, J., van der Aalst, W. M. P., Grisold, T., Kremser, W., Mendling, J., Pentland, B., Recker, J., Röglinger, M., Rosemann, M., & Weber, B. (2021). Process science: The interdisciplinary study of continuous change. SSRN Electronic Journal.

Vu, H., Klievtsova, N., Leopold, H., Rinderle-Ma, S., & Kampik, T. (2026). Agentic business process management: Practitioner perspectives on agent governance in business processes. In M. Er, R. M. Dijkman, H. A. Reijers, & S. Rinderle-Ma (Eds.), Business process management: Responsible BPM Forum, Process Technology Forum, Educators Forum (BPM 2025) (Lecture Notes in Business Information Processing, Vol. 565, pp. 33–49). Springer. https://doi.org/10.1007/978-3-032-02936-2_3

Wang, L., Ma, C., Feng, X., Zhang, Z., Yang, H., Zhang, J., Chen, Z., Tang, J., Chen, X., Lin, Y., Zhao, W. X., Wei, Z., & Wen, J.-R. (2024). A survey on large language model based autonomous agents. Frontiers of Computer Science, 18(6), 186345. https://doi.org/10.1007/s11704-024-40231-1

Xi, Z., Chen, W., Guo, X., He, W., Ding, Y., Hong, B., Zhang, M., Wang, J., Jin, S., Zhou, E., Zheng, R., Fan, X., Wang, X., Xiong, L., Zhou, Y., Wang, W., Jiang, C., Zou, Y., Liu, X., … Gui, T. (2025). The rise and potential of large language model based agents: A survey. Science China Information Sciences, 68. https://doi.org/10.1007/s11432-024-4222-0

Yao, S., Zhao, J., Yu, D., Du, N., Shafran, I., Narasimhan, K., & Cao, Y. (2023). ReAct: Synergizing reasoning and acting in language models. In Proceedings of the Eleventh International Conference on Learning Representations.

Zahra, S. A., & George, G. (2002). Absorptive capacity: A review, reconceptualization, and extension. Academy of Management Review, 27(2), 185–203. https://doi.org/10.5465/amr.2002.6587995